Back in September, I wrote a piece called, “Only 1 Step from Autonomous Warfare.” It was a moral analysis of a semi-autonomous killing of a top Iranian scientist. That semi-autonomous weapon system used facial recognition. Since then, I’ve found out about a killing by an even more autonomous weapon. The moral analysis is similar, but I think it’s worth mentioning this one separately.

Autonomous Warfare in the Libyan Civil War

Popular Mechanics reported in November:

Sometime around March 2020, this longstanding trope of science fiction—autonomous attack drones eliminating human beings on the futuristic battlefield—crossed over into science fact. That’s when, during the Second Libyan Civil War, the interim Libyan government attacked forces from the rival Haftar Affiliated Forces (HAF) with Turkish-made Kargu-2 (“Hawk 2”) drones, marking the first reported time autonomous hunter killer drones targeted human beings in a conflict…

Unmanned combat aerial vehicles, loitering munitions, and the Kargu-2 “hunted down and remotely engaged” HAF logistics convoys and retreating fighters, the UN report found. The autonomous drones were programmed to attack targets “without requiring data connectivity between the operator and munition,” meaning they located and attacked HAF forces independent of any kind of pilot or control scheme.

All these drones require is location coordinates and a type of target to shoot at. Given those two things, a drone will go out and destroy vehicles or kill people without further human input. This is autonomous warfare, and it is a shocking and unfortunate development.

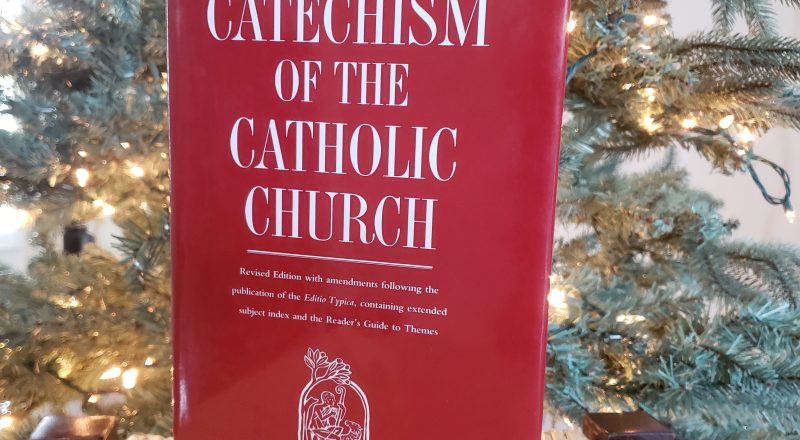

Moral Analysis of Autonomous Warfare

Vatican UN observer on Autonomous Warfare

The Vatican’s UN observer objected to autonomous warfare in August 2021. Crux wrote a summary of their statement:

The Vatican said Aug. 3 that a potential challenge was “the use of swarms of ‘kamikaze’ mini drones” and other advanced weaponry that utilize artificial intelligence in its targeting and attack modes. LAWS [Lethal Autonomous Weapons Systems], the Vatican said, “raise potential serious implications for peace and stability.” […]

LAWS can “irreversibly alter the nature of warfare, detaching it further from human agency.” […]

“Many organizations and associations and government leaders would agree on the same thing, that life and death decisions cannot be outsourced to a machine. The Holy See has said it as well as anybody,” said Jonathan Frerichs, Pax Christi International’s U.N. representative for disarmament in Geneva.

He told Catholic News Service it is unethical to believe “that we could pretend our future can be served by life and death decisions that can be turned over to an algorithm.”

My Piece in September

Let’s also look at what I wrote in September on this:

Weapons that can be more precise are beneficial as they reduce collateral death and injury to innocent people. It’s far better if, in warfare, you can just blow up an enemy base and not the school a block away as well. This has become even more important with things like seeking out terrorists than when fighting national armies. [AI or various methods of semi-autonomous operation] can help with this, especially when dealing with a remote weapon that would need to follow a target and would only get a signal a second after the human approved firing the gun. However, we need to be aware of the issues of privacy with facial recognition, possible errors with facial recognition, and the significant moral issues with the completely autonomous warfare this seems directed towards.

Autonomous Warfare Should Be Banned

This new development in Libya takes such warfare to a level where it seems unethical ever to use these weapons. In just war theory, one needs to be just both in the reason for the war (ad bellum) and in how the war is conducted (in bello). These weapons seem to fall in the category of those that can never justly be used, and so they should be banned. We should add them to the Geneva Convention. I recognize a global treaty offers no guarantees, but it at least makes it clear that anyone using these is the bad guy.

There can be legitimate fears of this reaching some level where the machines completely take over and fight the humans. That is clearly wrong as many works of science fiction have shown us.

I think even in their current form, there are significant enough concerns to make them unethical. Part of just war is doing the best to minimize civilian casualties. It also involves not killing those who surrender and killing only as many combatants as needed to achieve the goal. Having a human being hit the switch on each death helps to do this. Even if the AI identifies threats and a human presses a “Kill” button, we still add in the human element. Making death a complete calculation without a human element makes it far too easy for military leaders to kill beyond what is necessary. It could also lead to insufficient oversight to avoid killing those who are surrendering.

I think the Vatican is right and more of us should support them. We need many voices calling out to stop this dystopian idea from gaining traction.

Update: Human Rights Watch Article

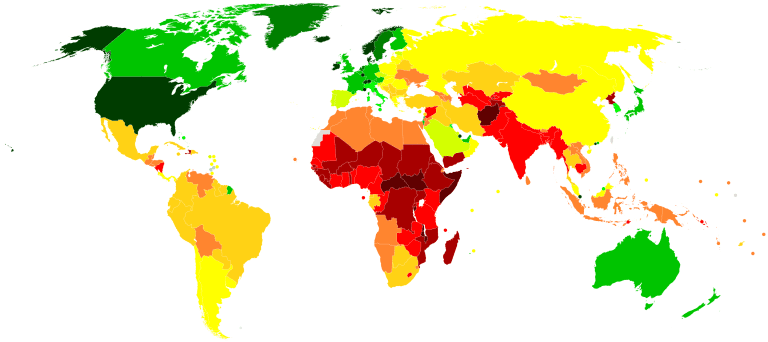

When I posted this in a Facebook group, someone pointed me to an article on the Human Rights Watch site. (Note: HRW is totally different from the HR Campaign, which is an LGBTQ+ activist group. HRW is generally commendable, but not perfect.) The article notes that 97 countries have spoken on the topic of autonomous killer robots, and says, “The vast majority of countries that have spoken to date regard human decision-making, control, or judgment as critical to the acceptability and legality of weapons systems.” It then lists every country. I’ll note the three that most of my readers live in. You may want to ask your government about this.

- USA: “The US is investing heavily in military applications of artificial intelligence and developing air, land, and sea-based autonomous weapons systems. In August 2019, the US warned against stigmatizing lethal autonomous weapons systems because, it said, they ‘can have military and humanitarian benefits.’ The US regards proposals to negotiate a new international treaty on such weapons systems as ‘premature’ and argues that existing international humanitarian law is adequate.”

- Canada: “In December 2019, Prime Minister Justin Trudeau instructed his Minister of Foreign Affairs, François-Philippe Champagne, to advance international efforts to ban fully autonomous weapons systems.”

- UK: “The UK said in November 2017 that ‘there must always be human oversight and authority in the decision to strike’ and said that responsibility lies ‘with the commanders and operators.’ The UK is developing various weapons systems with autonomous functions.”
